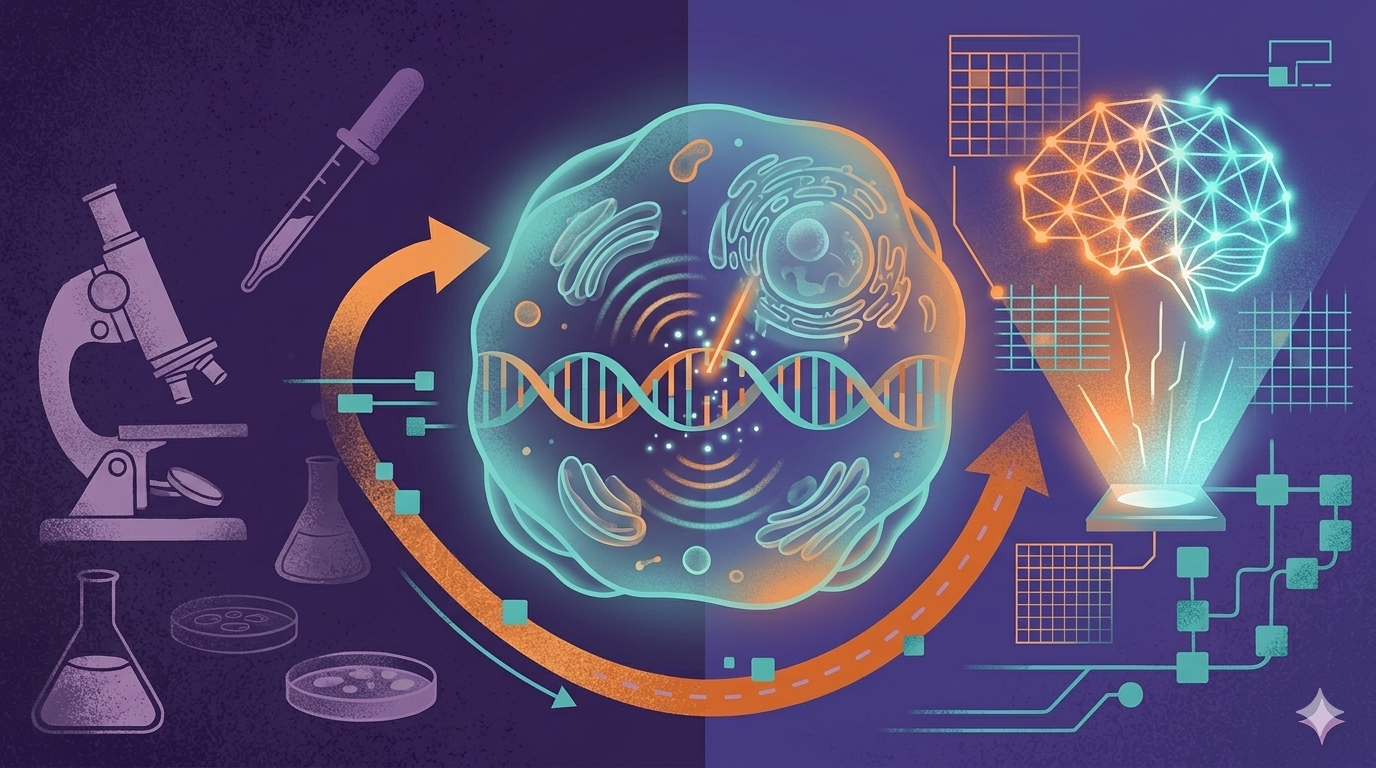

Predicting exactly how a biological system will respond to a new therapeutic is one of the most difficult challenges in precision medicine. At a fundamental level, pharmacological drugs work by intentionally altering a cell's baseline state. Most modern drugs (like siRNAs) do not completely erase a gene's function; instead, they knock down its impact, partially reducing its expression or inhibiting a downstream protein.

However, to simulate and study these drug effects during target discovery, scientists often rely on genetic tools like CRISPR to completely knock out (silence) a target gene. This process, known as perturbation, is the foundation of modern target discovery. Relying on physical laboratory methods, though, is becoming a major roadblock for modern drug development pipelines.
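
The difference between a full knockout and a partial knockdown can be pictured as two settings of the same operation on an expression profile. The snippet below is a toy sketch, not how any published model works: the function name `perturb_expression`, the `efficiency` parameter, and the three-gene baseline are all hypothetical, and it only changes the targeted gene. Predicting the downstream response of every other gene is the hard part that perturbation models are built for.

```python
import numpy as np


def perturb_expression(expression, gene_index, efficiency):
    """Return a copy of `expression` with one gene's level reduced.

    efficiency = 1.0 models a full knockout (expression set to zero);
    0 < efficiency < 1 models a partial knockdown.
    Hypothetical helper for illustration only.
    """
    if not 0.0 <= efficiency <= 1.0:
        raise ValueError("efficiency must be between 0 and 1")
    perturbed = np.array(expression, dtype=float)  # copies the input
    perturbed[gene_index] *= 1.0 - efficiency
    return perturbed


# Made-up baseline expression for three genes (arbitrary units).
baseline = np.array([10.0, 4.0, 8.0])

knockout = perturb_expression(baseline, gene_index=1, efficiency=1.0)
knockdown = perturb_expression(baseline, gene_index=1, efficiency=0.75)

print(knockout)   # target gene fully silenced
print(knockdown)  # target gene reduced to 25% of baseline
```

A CRISPR knockout screen corresponds to the `efficiency=1.0` case, while most drugs behave more like the partial case.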

To overcome this, the industry is turning to in-silico perturbation models like Elucidata's El-PERTURB, an AI model that allows biotech teams to simulate entirely computationally how cells will respond to genetic edits or drugs, flagging toxicities and adverse events early and isolating viable targets before you lift a single pipette.

While CRISPR-Cas9 revolutionized genetic engineering, depending entirely on physical lab screens for target discovery presents two severe constraints for commercial biotech teams:

Imagine a biotech company developing a novel therapeutic for liver disease. To succeed, they need a deep understanding of hepatocyte (liver cell) biology. The most critical question they face is:

How can we screen our lead compounds to flag toxic downstream side effects early in the program?

Catching toxicity late in clinical trials costs hundreds of millions of dollars. By shifting these screens to an in-silico (computational) environment, researchers can save years of time and entirely bypass the cost of physical assays. The primary challenge in doing this successfully is finding an AI model trained on standardized, tissue-specific cell data.

The push to solve this problem has sparked a massive wave of innovation, leading to several different state-of-the-art (SOTA) virtual cell architectures. The current landscape is dominated by three main approaches:

While these models are great technological achievements, many still rely heavily on immortalized cell lines or struggle when tasked with predicting completely novel biological contexts or newer cell lines, bringing us to the industry's biggest current bottleneck.

As AI steps in to solve these bottlenecks, the field has seen a surge of virtual cell models. But there is a frustrating problem in the industry right now:

Every time a new AI model is published, it is defined by its own evaluation framework. To truly understand how AI is mastering this space, we have to evaluate models against standard metrics pulled from the literature, for example:

When you take current state-of-the-art models and stress-test them outside of their comfort zones, performance often drops. Out-of-distribution (OOD) failure is a systemic challenge across the board.

Models struggle when faced with new cell types or drugs they weren't explicitly trained on.
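
One common way to expose this kind of failure is to hold out entire cell types from training, so that the test set contains only contexts the model has never seen. The snippet below is a minimal sketch of such a split; the function name `split_by_cell_type`, the record layout, and the placeholder labels are assumptions for illustration and do not describe any specific benchmark.

```python
def split_by_cell_type(samples, held_out_cell_types):
    """Split samples so held-out cell types never appear in training.

    `samples` is a list of dicts with a "cell_type" key (hypothetical layout).
    """
    held_out = set(held_out_cell_types)
    train = [s for s in samples if s["cell_type"] not in held_out]
    test = [s for s in samples if s["cell_type"] in held_out]
    return train, test


# Placeholder records; labels are made up for illustration.
samples = [
    {"cell_type": "cell_type_A", "perturbation": "gene_1"},
    {"cell_type": "cell_type_A", "perturbation": "gene_2"},
    {"cell_type": "cell_type_B", "perturbation": "gene_1"},
    {"cell_type": "cell_type_C", "perturbation": "gene_2"},
]

train, test = split_by_cell_type(samples, held_out_cell_types=["cell_type_C"])

train_types = {s["cell_type"] for s in train}
test_types = {s["cell_type"] for s in test}
print(train_types & test_types)  # empty set: no cell type is shared
```

The same idea applies to drugs or gene targets: hold out by the relevant key instead of `"cell_type"`. A random row-level split would leak every cell type into training and hide the performance drop.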

Interestingly, the solution isn't always a bigger model. Our data-centric approach focuses heavily on data curation, harmonization, and contextual richness, and has shown that high-quality data engineering can match or even outperform state-of-the-art architectures using up to 5x less in-context training data.

Some real limitations of our current models include:

These limitations are exactly the problems the field is working on next. Transitioning from basic lab cell lines to real patient data, and evolving from extreme genetic knockouts to partial knockdowns, is where the true value of in-silico perturbation prediction will be realized.

Building an AI model that surpasses the state of the art requires a superior data foundation. We achieve this through two distinct, structural advantages:

Connect with us and discover how perturbation prediction models can help solve the critical bottlenecks of physical CRISPR screens and transform target discovery.